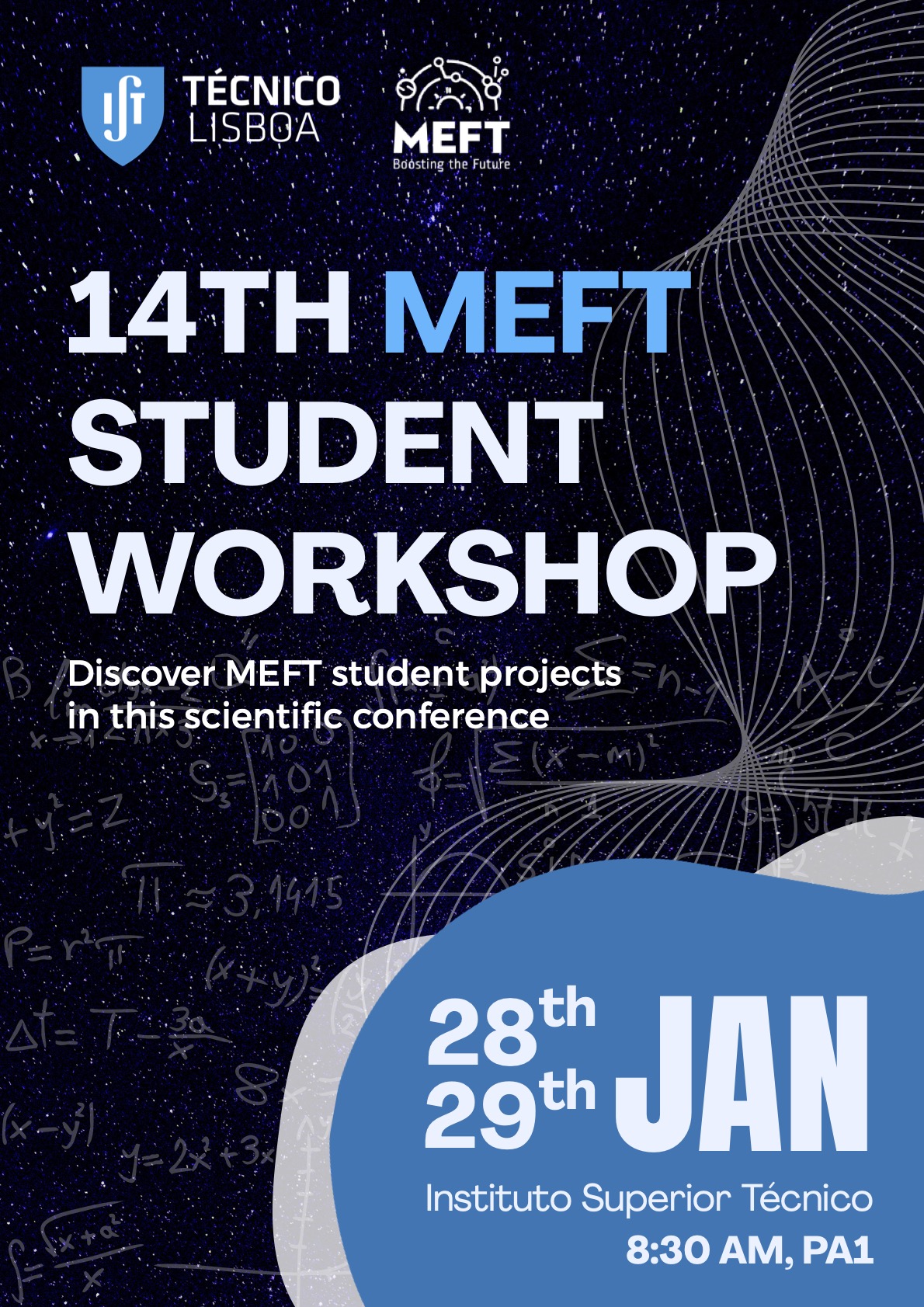

14th MEFT Student Workshop

Departamento de Matemática - PA1

Instituto Superior Técnico - Campus Alameda

The 14th MEFT Student Workshop is a 2-day conference during which Physics Engineering graduate students at Instituto Superior Técnico will share their projects with an audience of colleagues and professors.

Each student has a 10-minute slot: a video (4 minutes) and a short pitch (3 minutes), followed by 3 minutes of questions from the chairpersons and audience.

This takes the form of a typical scientific conference, with the objective of inspiring the students and kickstarting their scientific careers. This is not only symbolic, as it also represents the first step of their Master's Thesis.

-

-

08:30

Opening Session

Introduce the Workshop and share further information.

-

1

Measurement-Induced Phase Transition in Free Fermionic Systems

This project investigates the dynamics of Measurement-Induced Phase Transitions (MIPT) within non-interacting free-fermionic systems, exploring the competition between unitary evolution, which drives information scrambling, and stochastic projective measurements, which induce disentanglement. By employing the Fermionic Gaussian State (FGS) formalism and Peschel’s trick, the simulation tracks the time-evolution of the system's correlation matrix with polynomial complexity $\mathcal{O}(L^{3})$, avoiding the exponential cost associated with generic quantum states.

The study focuses on a tight-binding Hamiltonian applied to two distinct topologies: a one-dimensional periodic chain and a two-dimensional torus. To characterize the phase transition, three primary observables were calculated: von Neumann Entanglement Entropy, Inverse Participation Ratio (IPR), and Summed Point-to-Point Mutual Information.
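For a fermionic Gaussian state, the von Neumann entropy of a subsystem follows from the eigenvalues $\nu_k$ of the correlation matrix restricted to that subsystem, $S = -\sum_k [\nu_k \ln \nu_k + (1-\nu_k)\ln(1-\nu_k)]$. The sketch below illustrates this for the ground state of a periodic tight-binding chain. It is a minimal illustration, not the project's code: the function names, the unit hopping amplitude, and the choice of the ground state as the example state are assumptions made here.

```python
import numpy as np

def entanglement_entropy(corr, sites):
    """Von Neumann entropy of `sites` for a fermionic Gaussian state.

    corr[i, j] = <c_i^dagger c_j> is the full correlation matrix.
    """
    # Restrict the correlation matrix to the chosen subsystem.
    sub = corr[np.ix_(sites, sites)]
    nu = np.linalg.eigvalsh(sub)
    # Clip to avoid log(0); eigenvalues 0 or 1 contribute no entropy.
    nu = np.clip(nu, 1e-12, 1 - 1e-12)
    return float(-np.sum(nu * np.log(nu) + (1 - nu) * np.log(1 - nu)))

def ground_state_correlations(n_sites, n_particles):
    """Correlation matrix of the tight-binding ground state on a periodic chain.

    Assumes nearest-neighbour hopping with unit amplitude (illustrative choice).
    """
    h = np.zeros((n_sites, n_sites))
    for i in range(n_sites):
        j = (i + 1) % n_sites
        h[i, j] = h[j, i] = -1.0
    _, vecs = np.linalg.eigh(h)
    occ = vecs[:, :n_particles]  # lowest-energy single-particle orbitals
    return occ @ occ.T

corr = ground_state_correlations(8, 3)
print(entanglement_entropy(corr, [0, 1, 2]))
```

Diagonalizing an $\ell \times \ell$ block costs $\mathcal{O}(\ell^{3})$, which is where the polynomial scaling of the Gaussian-state approach comes from.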

The results demonstrate a fundamental dependence on system dimensionality. Finite-size scaling analysis reveals that the 1D system is fragile to measurements, exhibiting no stable volume-law phase in the thermodynamic limit, effectively yielding a critical probability of $p_{c}=0$. In contrast, the 2D system sustains a robust entangled phase against weak monitoring, with a phase transition occurring at a finite critical measurement probability in the interval $0.2 < p_{c} < 0.3$. These findings validate the developed computational framework for simulating measurement-induced dynamics in free fermions.

-

2

Non-equilibrium dynamics of dissipative strongly-correlated quantum matter

How interacting quantum systems relax toward equilibrium is a fundamental question in modern physics, especially when such systems are influenced by their surroundings. While isolated many-body systems can thermalize through their own interactions, realistic systems are often coupled to environments that introduce memory effects and modify their dynamics. Understanding how strong interactions and environmental coupling compete is crucial for both fundamental insights and emerging quantum technologies.

In this work, we investigate these questions using an open Sachdev–Ye–Kitaev (SYK) model coupled to a fermionic bath with a pseudogapped density of states. Using the Schwinger–Keldysh path-integral formalism and the large-$N$ limit, we derive self-consistent Schwinger–Dyson equations governing the system’s dynamics. Solving these equations, we identify distinct relaxation regimes: pseudogapped environments lead to universal algebraic relaxation, while flat or strongly suppressed baths produce exponential decay controlled by dissipation or intrinsic many-body chaos. These results demonstrate that non-Markovian dissipation can qualitatively alter relaxation beyond the Lindblad description.

-

3

Ion Implantation in III-Nitride Ferroelectric Ternaries

The recent observation of ferroelectricity in group III-nitride semiconductors containing rare-earth elements, such as AlScN and AlYN, has opened the door to a new generation of electronic devices. However, obstacles remain, such as growing high-quality ferroelectric crystals and mitigating the leakage currents associated with nitrogen vacancies. In this work, the crystal quality and structure of AlScN and AlYN samples are characterized with X-ray diffraction (XRD) and Rutherford backscattering spectrometry (RBS). Furthermore, for the first time, these samples were implanted with nitrogen ions in an effort to reduce the leakage currents. The implanted samples are subsequently thermally annealed and characterized by the same techniques. Preliminary results on the implantation and structural characterization of two samples will be presented.

-

4

Thin Film Deposition: Layer by Layer until the Sensor

One focus of this project is the deposition and optimization of magnetic sensors, in particular spin valves, which are based on giant magnetoresistance (GMR). These sensors are fundamental for detecting magnetic fields, with applications ranging from industrial detection to advanced biotechnology. They rely on the relative orientation of the magnetic moments of the free layer and the pinned layer. Controlling the spacer (the layer between these two layers), the exchange bias, and the ferromagnetic coupling is key to achieving a sensitive magnetoresistive profile.

Another focus of this study is the influence of layer-by-layer thin-film deposition on the sensor’s magnetic response and sensitivity, taking into account aspects such as the quality of the interfaces and the magnetic stability.

The deposition method chosen was magnetron sputtering on the Nordiko 2000 at INESC MN. This machine allows the sequential deposition of different films and the adjustment of the deposition rate of each material. After deposition, the samples underwent magnetic annealing to help define the sensor’s anisotropy and stabilize its performance.

The first spin valves showed a change in resistance under an external field, confirming that the sensors respond to it. Through systematic optimization of the thicknesses of various layers and the use of magnetic annealing, it was possible to double the magnetoresistance (MR) signal compared to the initial configuration. This value still needs to improve, because it did not reach what is considered a good signal (MR > 10%). To achieve this, future work will improve the vacuum conditions on the Nordiko 2000 and optimize the deposition conditions. Additionally, the use of a nano-oxide layer (NOL) and the optimization of the target-substrate distance can be explored to increase the magnetoresistance and magnetic anisotropy of the sensor.

-

5

Sn-doped Gallium Oxide Thin-Film Field-Effect Transistors for UV Sensing

Gallium oxide (Ga₂O₃) is an ultra-wide bandgap semiconductor with strong potential for solar-blind UV photodetection. In this work, the growth of Sn-doped Ga₂O₃ thin films is investigated to optimize their use as channel layers for enhancement-mode, bottom-gate bottom-contact (BGBC) thin-film field-effect transistors (FETs) aimed at UV sensing applications. Thin films are deposited primarily by RF magnetron co-sputtering under varying growth conditions to control conductivity, with ion implantation explored as an alternative doping method. The influence of different substrates and post-deposition annealing on the structural, optical, and electrical properties of the films is evaluated. Characterization is performed using profilometry, Rutherford backscattering spectrometry, X-ray diffraction, scanning electron microscopy, optical absorbance, and electrical measurements. The most promising films will be integrated into pre-fabricated FET templates and assessed for transistor operation and UV detection performance. This work seeks to establish optimized growth and doping conditions for scalable Ga₂O₃-based solar-blind UV FET devices.

-

6

Ultrasensitive Magnetoresistive Sensors

The design of an ultrasensitive magnetoresistive sensor is a compromise between detectivity, sensitivity, linearity, hysteresis, device footprint, and power consumption. This work focuses on exploring magnetic tunnel junctions (MTJs), which provide a scalable and low-cost solution for magnetic sensing at room temperature, with strong potential for high spatial resolution and applications where proximity to the field is important. An analysis of how design choices impact the final performance and feasibility of the end device showed that detectivity can be improved either by increasing sensitivity (e.g., through flux concentrators) or by reducing noise (e.g., parallel sensor architectures), but these approaches also introduce disadvantages, such as larger device footprints and fabrication complexity. The results indicate that meeting the target specification is most realistically approached through a combination of 3D arrays of small junctions, carefully chosen biasing, and magnetic flux concentrators to boost detectivity while maintaining a linear, non-hysteretic response.
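The trade-off between sensitivity and noise can be made concrete with a back-of-the-envelope estimate. The sketch below takes detectivity as the voltage noise density divided by the sensitivity, and assumes an idealized array of identical elements with uncorrelated noise (improvement by $\sqrt{N}$) behind a flux concentrator that multiplies the field by a fixed gain. These scaling assumptions, the function names, and the numbers are illustrative and are not taken from the work itself.

```python
import math

def detectivity(noise_v_per_rt_hz, sensitivity_v_per_t):
    """Field detectivity in T/sqrt(Hz): voltage noise density over sensitivity."""
    return noise_v_per_rt_hz / sensitivity_v_per_t

def array_detectivity(single, n_elements, concentrator_gain=1.0):
    """Idealized detectivity of an array of identical elements.

    Assumes uncorrelated noise between elements (sqrt(N) improvement) and a
    flux concentrator that multiplies the field at the sensor by a fixed gain.
    """
    return single / (concentrator_gain * math.sqrt(n_elements))

# Hypothetical numbers: 10 nV/sqrt(Hz) noise and 10 V/T sensitivity.
single = detectivity(10e-9, 10.0)
print(single, array_detectivity(single, n_elements=100, concentrator_gain=10.0))
```

Both routes lower the detectivity figure, but each added element or concentrator increases the footprint, which is the compromise described above.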

-

7

Gallium Oxide pn heterojunctions using RF-sputtering on p-type substrates

Gallium oxide (Ga$_2$O$_3$) is an ultra-wide bandgap semiconductor with strong promise for high-voltage power devices and solar-blind UV photodetectors, but its practical deployment is constrained by low thermal conductivity and, most critically, the lack of reliable p-type doping. A common route around this limitation is heterointegration, where n-type Ga$_2$O$_3$ is combined with established p-type semiconductors to form anisotype pn heterojunctions.

This work combines a focused review of Ga$_2$O$_3$ material drivers with a preliminary fabrication study of sputtered Ga$_2$O$_3$ thin films and high work function Ni/Au contacts deposited on p-Si, p-GaN, and p-diamond using RF and DC sputtering. SEM revealed smooth films on Si and GaN, while films on diamond replicated the substrate roughness. RBS with SIMNRA fitting yielded near-expected Ga:O ratios on smooth substrates and thicknesses in the 150 to 200 nm range, but indicated limitations for rough substrates. Electrically, Ni/Au behaved ohmically on diamond but remained highly resistive on p-GaN, motivating post-metallization annealing. Future work will include XRD phase identification, improved RBS modeling, PIXE impurity quantification, systematic annealing sweeps, and photoconductivity measurements under UV illumination.

-

8

Magnetoresistive devices for industrial applications: improvement of thermal robustness

Tunnel magnetoresistance (TMR) sensors are promising for industrial applications due to their high sensitivity and large output signals, but reliable operation at elevated temperatures remains challenging. This work investigates the thermal robustness of TMR sensors by comparing three multilayer stacks with different antiferromagnetic layer thicknesses, tunneling barrier thicknesses, sensing multilayers and deposition techniques. Temperature-dependent magnetoresistance measurements were used to evaluate the evolution of TMR, sensitivity, resistance-area product, and reference system stability. In addition, the behavior of the cylindrical sensing layer was studied through the analysis of temperature-dependent vortex nucleation and annihilation fields. The results show a systematic degradation of performance with temperature, strongly influenced by the blocking temperature and loss of exchange bias. Re-annealing experiments demonstrate that this degradation is largely reversible, indicating that loss of exchange bias is the dominant mechanism limiting performance.

-

9

On Flavour Invariants of the N Higgs Doublet Model

The Standard Model (SM) of particle physics is the best description of Nature at the most fundamental level we currently have. Nevertheless, it does not account for the dark matter content of the Universe, it does not explain the observed matter-antimatter asymmetry, and, in its simplest form, it does not account for neutrino masses. One way to address some of these problems is to extend the SM with new particles and interactions. The N Higgs Doublet Model (NHDM) is one example of such extensions.

In the most general NHDM, there are flavour changing neutral couplings between quarks and scalars in its flavour sector. These are known from experiment to be very small. So far, no systematic analysis of flavour physics has been developed for the NHDM. This work will develop such an analysis by characterising the flavour sector of the NHDM in terms of eigenvalue and mixing matrices akin to the mass matrices and CKM matrix of the SM. Furthermore, a description of the flavour sector of the NHDM will be provided in terms of flavour invariants, quantities that do not depend on the basis chosen for the quarks.

-

10

Multi-Parton Systems in a QCD medium

Quantum Chromodynamics (QCD) is the theory of the strong interaction, responsible for binding quarks and gluons inside hadrons. In ultra-relativistic heavy-ion collisions, such as those at the LHC, these constituents are liberated to form a hot, dense phase of deconfined matter — the Quark-Gluon Plasma (QGP). Jets, collimated sprays of hadrons produced by hard scattered partons, interact with the QGP, a phenomenon known as jet quenching. A key open question is how these in-medium interactions unfold in space and time, and how they modify the internal structure of parton showers.

Among the variables proposed to describe this evolution, formation time plays a central role. It provides a dynamical scale for when partons effectively decouple from their parent emitter — a concept that becomes even richer in a medium where multiple emissions and interference effects coexist.

This thesis will analytically study double soft-gluon emission in a QCD medium, focusing on the interference pattern between subsequent emissions. The aim is to identify under which conditions the radiation pattern factorises and when quantum coherence prevents a simple interpretation in terms of independent emissions. This work will contribute a crucial building block to the development of space-time-resolved parton showers, and it will lay the theoretical groundwork for future studies of in-medium QCD jets.

-

11

Expanding the Physics Reach of the ATLAS Detector with Fast Machine-Learning

The Standard Model of Particle Physics (SM) is a very successful and precise description of particle interactions, yet it leaves unexplained several observable phenomena, such as the matter-antimatter asymmetry or dark matter. Therefore, after the discovery of the Higgs boson by the ATLAS and CMS experiments at the Large Hadron Collider (LHC), searching for new physics beyond the SM (BSM) has been one of the main focuses of the LHC experiments.

In its next stage, the High-Luminosity LHC (HL-LHC), to start running in 2030, will present new challenges in trigger and data acquisition, due to its much larger collision rates. The ATLAS detector relies on real-time event filtering systems to process millions of proton-proton collision events and decide whether to discard or keep them for further processing. This decision is currently reached within a few microseconds by a hardware-based trigger. In order to cope with the higher collision rate expected at the HL-LHC, the ATLAS trigger system will be upgraded to allow for a first decision latency of up to 10 microseconds.

It is crucial that the trigger decision is as unbiased as possible, to avoid reducing or eliminating completely any sensitivity to potential (and even unexpected) BSM signals. Therefore, taking advantage of the increased hardware trigger latency, complex algorithms that rely on fast machine-learning can be used to reach the trigger decision. In this work, we will explore and develop dedicated ML algorithms that can perform fast background rejection in favor of a model-agnostic signal detection, e.g. via unsupervised learning. We will focus on signal selection based on large-radius jets, developing a strategy that is able to discriminate hadronic boosted objects, or other signs of new physics in hadronic final states, from the QCD background jets.

-

12

Constraints on multi-scalar models

The Standard Model of Particle Physics is one of the most successful theories ever created. Nevertheless, it fails to account for several phenomena observed in nature, making extensions of the Standard Model necessary.

In this work, we provide a complete description of perturbative unitarity bounds for the gauge-scalar sectors of models with extra $SU(2)$ doublets, neutral singlets, or charged singlet scalars. These models appear frequently in Beyond the Standard Model theories and are particularly common in scenarios addressing Dark Matter. We present a classification together with a minimal set of scattering matrices that capture all the relevant information. We also developed a Mathematica implementation of our results, called BounDS.

-

13

Energy Spectrum Reconstruction of Air-Shower Components with a Multi-Hybrid Detector Station

Ultra-high-energy cosmic rays (UHECRs) initiate extensive air showers (EAS) through their interactions with molecules in the Earth’s atmosphere, producing massive cascades of secondary particles. Measurements of these showers enable the study of both the properties of the primary cosmic rays and the high-energy hadronic interactions that govern shower development. However, due to the intrinsic limitations of air-shower experiments, the energy spectrum of air-shower components at ground level has not yet been measured.

This thesis investigates the feasibility of reconstructing the energy spectra of different shower components by employing a multi-hybrid detector station composed of a surface scintillator detector (SSD), a water Cherenkov detector (WCD), and resistive plate chambers (RPCs). Additionally, we evaluate the performance of the MARTA prototype station, which integrates a WCD and four RPCs and is deployed within the $750\,$m-spaced Surface Detector (SD) array of the Pierre Auger Observatory. Bridging the gap between simulations and real data constitutes a key step towards the development of a complete end-to-end analysis framework for energy spectrum reconstruction.

We establish the proof-of-concept by demonstrating that regions in the combined detector signal space can be mapped to corresponding regions in the spectral space. Future work will focus on developing concrete transformation methods to enable the full reconstruction of the original energy spectra.

Speaker: Guilherme Neves (IST, LIP)

-

14

Exploring jet substructure in public LHC data

This work establishes a reproducible workflow to study jet substructure using public CMS Open Data from the LHC. Using the JetHT Run2016G MiniAOD dataset, we extract AK4 (anti-$k_t$, $R=0.4$) jet kinematics and constituent-level information and focus on a hard regime ($p_{T,\text{jet}} > 200~\mathrm{GeV}$) to probe perturbative QCD with reduced non-perturbative and pileup sensitivity. We reconstruct the jet branching history by re-clustering constituents with the Cambridge–Aachen algorithm and performing Lund declustering to build primary and secondary Lund jet planes. The resulting average Lund-plane densities show the expected kinematic triangular structure and radiation patterns, while SoftDrop grooming ($\beta=0$, $z_\text{cut}=0.1$) yields the anticipated migration from soft wide-angle activity toward harder, more collinear emissions. Building on this framework, we explore two observables sensitive to collinear spin correlations: the Lund-based azimuthal angle $\Delta\psi_{12}$ between the planes of the hardest primary and secondary branchings, which exhibits the characteristic $\cos(2\Delta\psi_{12})$ periodicity with a negative modulation, and a hadron-collision adaptation of the three-point energy correlator (EEEC), whose $\Delta\psi$ distribution follows a $\cos(2\Delta\psi)$-like modulation with positive sign over full angular integration but changes markedly under restricted angular ranges, indicating a non-trivial dependence on integration choices. These results demonstrate that CMS Open Data enables meaningful, substructure-level studies of QCD spin-interference effects, while motivating further validation of angle definitions, comparisons to simulation and detector-level effects, optimized EEEC computation, and flavour-tagged measurements.
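The per-splitting quantities behind the Lund plane and the SoftDrop condition can be written down compactly. The sketch below uses the standard definitions: the momentum fraction $z = \min(p_{T,a}, p_{T,b})/(p_{T,a}+p_{T,b})$, the relative transverse momentum $k_t = \min(p_{T,a}, p_{T,b})\,\Delta R$, and the SoftDrop requirement $z > z_\text{cut}(\Delta R/R_0)^{\beta}$. The function names and the example numbers are illustrative, and $R_0 = 0.4$ is assumed here to match the AK4 jet radius; this is not the analysis code.

```python
import math

def delta_r(y1, phi1, y2, phi2):
    """Angular distance in the (rapidity, azimuth) plane, with phi wrapped."""
    dphi = (phi1 - phi2 + math.pi) % (2 * math.pi) - math.pi
    return math.hypot(y1 - y2, dphi)

def lund_coordinates(pt_a, pt_b, dr):
    """Return (ln(1/dr), ln(kt), z) for a splitting into subjets a and b."""
    pt_soft = min(pt_a, pt_b)
    z = pt_soft / (pt_a + pt_b)
    kt = pt_soft * dr  # transverse momentum of the softer branch w.r.t. the harder
    return math.log(1.0 / dr), math.log(kt), z

def passes_soft_drop(pt_a, pt_b, dr, z_cut=0.1, beta=0.0, r0=0.4):
    """SoftDrop condition: z > z_cut * (dr / r0) ** beta."""
    z = min(pt_a, pt_b) / (pt_a + pt_b)
    return z > z_cut * (dr / r0) ** beta

# Hypothetical splitting: subjets of 150 GeV and 50 GeV separated by dr = 0.2.
print(lund_coordinates(150.0, 50.0, 0.2), passes_soft_drop(150.0, 50.0, 0.2))
```

With $\beta = 0$ the condition reduces to a pure momentum-fraction cut $z > z_\text{cut}$, independent of angle, so soft branches are dropped at all angles until a sufficiently hard splitting is found.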

-

12:30

Lunch Break

-

15

The Effect of Heterogeneous Heating on the Mineralogical Composition and on the Luminescence Dosimetry of Ceramic Materials

Abstract

Luminescence dating estimates the time elapsed since a sample (e.g. sediment or ceramic) was last exposed to heat or sunlight by measuring its paleodose or absorbed dose and dose rate [1]. In the case of ceramics, it is also possible to infer the maximum temperature achieved during their production process [2]. Ceramic sherds collected on a surface survey in an unexcavated kiln area of Villa Cardílio, a Roman amphora production center in Torres Novas, were analyzed as a case study [3]. The study aims to explore past firing techniques, how the quartz grains extracted from these ceramics act as a luminescence dosimeter, and the influence of the heating process on ceramic mineralogy and on the luminescence signals used for dating.

X-ray diffraction (XRD) was used to identify and semi-quantify the mineralogical assemblage of the ceramic paste. Thermally/optically stimulated luminescence (TL/OSL) dosimetry on coarse quartz grains (160-250 µm) from the ceramics was used to determine the absorbed dose and to explore the dosimetric properties of quartz under different measurement conditions.

According to the semi-quantitative analysis and the OSL results, sample AB-5 appears to have been exposed to higher temperatures and longer heating durations than the other six samples. The TL measurements were performed using three optical filters (Hoya-340, BG3/BG39 and Corning 7-59). The results show that the Corning 7-59 filter yields the highest TL signal intensity. Future prospects include a systematic comparison of methods and conditions to conclude which are the most suitable to determine the absorbed dose, as well as applying this methodological framework to samples with different chronologies and production technologies.

References

[1] M. J. Aitken, “Archaeological dating using physical phenomena,” Reports on Progress in Physics, vol. 62, pp. 1333–1376, Aug. 1999.

[2] J. R. Vidal-Romani et al., “TL estimation of ages of pottery fragments recovered from granite caves in the NW coast of Spain,” Cuadernos Laboratorio Xeoloxico de Laxe, vol. 37, pp. 73–88, Jul. 2013.

[3] V. Filipe et al., “Villa Cardílio (Torres Novas, Portugal), um novo centro produtor de ânforas romanas no Médio Vale do Tejo,” 2025.

-

16

Towards the observation of prebiotic molecules in Titan's atmosphere

Titan, Saturn's largest moon, hosts a dense nitrogen-methane atmosphere where complex organic chemistry produces molecules of potential prebiotic significance. Photochemical models predict the presence of reactive radical intermediates, such as CH, C$_2$, and CN, that play crucial roles in forming more complex organic compounds, yet these species remain undetected. Here we present ultra-high-resolution visible spectroscopic observations of Titan obtained with VLT-ESPRESSO during December 2024, targeting these elusive photochemical intermediates. We developed a comprehensive data reduction pipeline to distinguish Titan's intrinsic absorption features and successfully validated the detection of multiple CH$_4$ absorption bands. We then generated synthetic transmittance spectra for CH, C$_2$, and CN and performed $\chi^2$ minimisation analyses across varying atmospheric parameters. While we identified spectral regions potentially consistent with absorption of these molecules, the features lacked coherent alignment with modelled band structures and fell within regions dominated by solar and telluric contamination and methane absorption, preventing unambiguous attribution. We conclude that visible wavelengths, though accessible to ESPRESSO's exceptional resolution, are not optimal for detecting these radicals on Titan. Future observations should target infrared wavelengths where rovibrational transitions of most molecular species of atmospheric interest are stronger, less blended with solar features, and more amenable to detection.

-

17

Exploring the mechanisms of visual place learning in the fruit fly

It has been demonstrated that Drosophila melanogaster and other insects can associate locations in space with rewards and use this knowledge later on, but the concrete mechanisms underlying this ability are not entirely understood. This project aims to shed light on the specific sensory and motor features underlying visual place learning in insects.

Because of its many experimental advantages and complex navigational behaviors, which are replicable in artificial and controlled lab conditions, the fruit fly Drosophila melanogaster is a great model for studying visual place learning. In addition, due to the high conservation of navigational brain structures across arthropods and vertebrates, the findings generated in this project can be extended to other insects and animal systems.

We will look at the fly’s exploratory behavior in a featureless world and in the presence of different visual landmarks projected on the floor instead of vertical visual panoramas. Next, we will introduce a fixed cool spot in a heated arena as a reward, marked by an exactly overlapping landmark projection. Flies will be trained in this setup and then tested without any reward. While walking flies explore these virtual worlds, we will quantitatively analyze the movement strategies they take.

Together, these experiments will allow us to understand 1) whether flies can learn to associate projected landmarks on the floor with a reward, 2) how they may use this visual-based learning to find a reward in a quicker or more efficient way via changes in their navigational strategy, 3) which visual features are most important for place memory formation, 4) whether flies show local search behavior in our setup and 5) how this search behavior interacts with the visual memory obtained during training.

The projected findings of the experimental part of this project will be significant for understanding more about the navigational mechanisms of the fruit fly and insects in general when it comes to place learning and exploratory/search behaviors, with possible applications for bio-inspired robotics.

Speaker: João Rodrigues

-

18

Development of a Wafer Level Process Control Tool for Performance Prediction of Magnetoresistive Sensors

Magnetoresistive technologies, such as Tunneling Magnetoresistance (TMR), are essential in modern sensing due to their high sensitivity and low power consumption. However, these sensors face performance variability and higher production costs compared to traditional Hall sensors, primarily due to manufacturing-induced non-uniformities across the wafer. This project, conducted at INESC-MN, details the development of two specialized process control tools, Heimdall and Brokk, designed to predict and monitor these variations early in the fabrication process.

Heimdall is a C++ command-line interface created to monitor in-line deposition parameters. It analyzes operational logs from Nordiko deposition systems to evaluate process stability, track target usage, and identify anomalies or trends that could lead to device failure.

Brokk is a Python-based graphical user interface developed to visualize spatial non-uniformities across a wafer using resistivity mapping and profilometry data. A key feature of Brokk is its ability to simulate and predict TMR sensor transfer curves at any point on the wafer before full fabrication is complete.

Together, these tools establish a framework for wafer-level performance prediction and early fault detection. By identifying problematic wafers early, this framework contributes to improved yield, resource preservation, and increased manufacturing efficiency for TMR sensors.

-

19

Ion acceleration from intense laser-plasma interactions

Plasma-based particle accelerators offer the prospect of drastically reducing the size and cost of accelerator facilities, improving accessibility while providing a promising route toward next-generation machines at the energy frontier. Because plasmas are already ionized (consisting of free electrons and ions), they can sustain accelerating fields several orders of magnitude higher than those achievable in conventional solid-state radio-frequency structures. This makes plasma accelerators particularly attractive for the development of compact and tunable ion sources. In this context, proton and ion beams are of special interest due to their highly localized energy deposition (Bragg peak), which underpins proton therapy and other applications requiring precise dose delivery. A promising approach to achieving such compact sources is laser-driven ion acceleration from solid-density targets.

In this work, we investigate radiation pressure acceleration (RPA) in overdense plasmas, where an intense laser pulse transfers its momentum to the target through a charge-separation layer driven by radiation pressure. We study the light-sail regime of RPA using 1D, fully relativistic particle-in-cell (PIC) simulations performed with the OSIRIS PIC code. The simulations resolve the main stages of the interaction: target compression, formation of the charge-separation field, coherent acceleration of a dense ion bunch, and its subsequent evolution. By comparing analytical predictions with numerical results, we show that the fundamental physics of RPA is accurately captured within the kinetic PIC description.

-

20

Analysis of perpendicular mean flows and fluctuations for various magnetic field configurations and heating methods

Research on how to extract energy from nuclear fusion reactions has been active since the last century and comes hand in hand with our growing need for a clean and sustainable source of energy. At the high temperatures required, the system forms a plasma, which needs to be confined so that high densities and high temperatures are maintained. One way we can do so is through magnetic fields, with the tokamak and the stellarator being the devices with the best performance in magnetic confinement fusion.

Energy losses remain the biggest challenge in fusion research, with their main contribution coming from turbulent transport, the disordered flow of the plasma. Although a full understanding of turbulence dynamics in fusion plasmas is still missing, it is known that sheared profiles in the plasma velocity provide a way of suppressing turbulence, by stretching and breaking apart fluctuating structures (vortices) into smaller structures.

Research work for this thesis will be performed at the Wendelstein 7-X stellarator. Fusion plasmas subject to various magnetic field configurations and heating methods will be studied, and the Doppler reflectometry diagnostic technique will be central to the work, since it provides a measurement of both the plasma’s velocity and its density fluctuation level at various probed regions in the device. With this in mind, research will further explore the interaction between turbulence and sheared plasma flows, which play a crucial role in the development of optimized fusion reactors.

-

21

Modelling the interaction between low-temperature plasmas and separation membranes

This project explores the potential of low-temperature plasmas (LTPs) integrated with separation membranes for the efficient conversion of CO₂ into useful products. The study highlights the advantages of plasma-assisted processes over conventional approaches, such as thermocatalytic and electrochemical techniques, particularly the ability of LTPs to selectively dissociate CO₂ molecules.

Focusing on the modelling of plasma–membrane interactions, the research aims to develop comprehensive fluid models capable of predicting and optimizing both plasma behavior and membrane separation performance. The results obtained will be compared with experimental measurements from plasma–membrane systems currently under investigation in laboratories worldwide, allowing the identification of the most suitable physical models and approximations to describe these coupled systems.

Ultimately, this work aims to provide a foundation for the design of energy-efficient and scalable plasma–membrane technologies that can contribute to ongoing efforts towards a net-zero carbon economy.

-

15:15

Coffee Break

-

22

Hybrid Electron Acceleration in Plasma-Based Accelerators

Direct laser acceleration (DLA) is a leading mechanism for generating high-energy electron beams with high total charge in underdense plasma. Multi-petawatt facilities enable acceleration of hundreds of nC to energies of several GeV. In this study we employ 3 PW laser pulses to explore how varying the laser pulse duration influences the interplay between DLA and laser wakefield acceleration (LWFA). By systematically adjusting the laser pulse duration, we investigate its effects on the properties of the accelerated electrons. Our research reveals that as the pulse duration becomes comparable to the plasma wavelength, a unique regime emerges where both DLA and LWFA can accelerate electrons simultaneously. In this hybrid regime, the interaction between the laser and plasma enables more effective injection and acceleration of electrons, resulting in higher energy gain and improved beam quality. We demonstrate the importance of laser pulse duration for controlling the acceleration process in advanced laser-driven electron sources.

-

23

Conditions for Dark Matter Ignition of White Dwarf Stars

Dark matter constitutes approximately 84% of the total matter in the universe, yet despite decades of experimental effort, no direct detection has been achieved. We focus on Weakly Interacting Massive Particles (WIMPs) as a dark matter candidate. Their extremely weak interactions with ordinary matter place them beyond the reach of current detectors here on Earth. White dwarfs offer a possible alternative detection method: their strong gravitational wells can capture and accumulate WIMPs over billions of years, potentially reaching densities where self-annihilation produces observable heating effects. This thesis investigates the conditions under which WIMP accumulation in white dwarf cores can trigger either catastrophic thermonuclear ignition (Type Ia supernovae occurring before the Chandrasekhar limit is reached) or detectable thermal anomalies in cooling curves. A theoretical framework is developed encompassing WIMP capture rates, thermalization timescales, and annihilation heating in degenerate stellar cores across a range of WIMP masses ($10^5$–$10^8$ GeV) and scattering cross-sections ($10^{-45}$–$10^{-40}\,\text{cm}^2$). The predicted observational signatures are deficits of old white dwarfs in high dark matter density regions and anomalously luminous white dwarfs that could provide indirect evidence for dark matter.

-

24

Time-Skipping Stellar Evolution: A Machine-Learning Approach to Computational Astrophysics

This work explores the use of machine learning to emulate stellar evolution codes, which are used to predict complete stellar tracks and detailed global and internal properties throughout a star’s lifetime. Although these codes are essential tools in astrophysical research, they are computationally expensive and require significant manual effort. Alternative approaches, such as analytic fitting or interpolation, are poorly automated and introduce substantial systematic errors. This study focuses on the Red Giant Branch (RGB) phase, a particularly complex and time-consuming stage of stellar evolution to model. We evaluate classical machine learning and deep learning models trained on pre-computed stellar track grids to efficiently reproduce and time-skip the RGB phase. This approach aims to achieve improved computational efficiency compared to existing methods and provide physical insight into the key parameters governing stellar evolution.

-

25

Critical Gravitational Collapse with a Charged Scalar Field

In recent groundbreaking work, it was shown that there is a regime of critical collapse where the critical solution is an extremal Reissner-Nordström black hole. It was also conjectured that such critical phenomena would occur for the spherically symmetric Einstein-Maxwell-charged scalar field system. In this work, we report on early developments to test this conjecture. We perform the 3+1 decomposition of the Maxwell equations as well as the massless Klein-Gordon equation. We implement the evolution of the vector potential ${}^{(3)}{A}_i$ in the existing numerical relativity code BAMPS, which introduces the curl constraint $\mathcal{G}_A^i = (D\times A)^i - B^i$. To check the correctness of the code, we simulate an electromagnetic dipole on a flat background and a Reissner-Nordström black hole on a fixed background. The first simulation was successful, showing exponential convergence. However, the second showed exponential growth of $\mathcal{G}_A^i$ with resolution and in time. This suggests that constraint damping for $\mathcal{G}_A^i$ needs to be implemented in the future.

-

26

Dynamics of Elastic Bodies in General Relativity

We analyze the dynamics of extended bodies in an expanding universe, in the context of General Relativity. The objects of study are one-dimensional string loops and two-dimensional spherical membranes, both elastic and rigid, with a Schwarzschild-de Sitter metric. We first consider them as test bodies via parametrization and secondly as hypersurfaces that backreact on the metric. We characterize their motion and determine under which conditions this motion is bounded. We conclude that in both approaches there are five different regimes of motion, and that for small initial radius and mass the motion of the elastic body is bounded. Expansion occurs only if the radius is on the order of a third to half the cosmological radius.

-

27

Superradiance in Einstein-Gauss-Bonnet gravity with Born-Infeld electrodynamics

In string theory, a natural low-energy effective action is the Einstein-Gauss-Bonnet gravity, in which a Gauss-Bonnet term accompanies the usual Einstein-Hilbert action. Born-Infeld electrodynamics arises when considering an Abelian gauge field coupled to the open bosonic string or open superstring. We will analyze the superradiant behavior of a massive, charged scalar field coupled to a spherically symmetric, charged black hole solution within this modified theory of gravity, and compare with the classical Reissner-Nordström solution. To do so, we will compute the cross-section and the quasinormal-modes (QNMs) spectrum, analyzing how this modification of Einsteinian gravity affects the possible observables.

-

28

Spatiotemporal light springs with frequency chirp

This project investigated spatiotemporal light springs (LS): ultrashort, helical space–time wave packets generated by engineering a topological–spectral correlation in which the orbital angular momentum (OAM) varies with optical frequency. Building on a mode-by-mode construction of LS using Bessel–Gauss beams, the project expands LS tunability by introducing controlled spectral-phase (chirp) manipulation as an additional, experimentally relevant degree of freedom. A MATLAB simulation framework is developed in which the LS field is synthesized by coherently summing frequency modes with prescribed OAM and spectral phase, enabling full 3D visualization ($x$, $y$, $t$) of intensity isosurfaces and instantaneous-frequency maps. To reach the temporal sampling needed for frequency-resolved diagnostics, the code is substantially optimized by precomputing spatial modes and separating spatial and temporal loops, reducing computational cost from $O(N_{\text{time}}\times N_{\text{modes}})$ to $O(N_{\text{time}}+N_{\text{modes}})$, and further accelerated via GPU parallelization.

A key result is that treating spectral phase as sectorized (aligned with the discrete OAM/frequency sampling used in LS generation) makes chirp-like manipulation predictable and intuitive. Two independent controls are identified: step control, which reshapes the rotational structure and enables Angular Delay and Angular Delay Dispersion (including multi-helix "orbital dispersion" states), and slope control, which governs temporal placement and stretching via Longitudinal Delay and Longitudinal Delay Dispersion, including time-separated sub-LS synthesis through mode grouping. Finally, an LS platform incorporating supercontinuum broadening to access ultrabroadband regimes was developed in supporting laboratory work, motivating future applications in tailored ultrafast excitation and spectroscopy.
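The mode-by-mode synthesis described in this abstract can be illustrated with a short NumPy sketch. It is not the project's MATLAB code: the function name, the parameters, and the simplified ring-shaped spatial profile (used here in place of true Bessel–Gauss modes) are all assumptions made for illustration. The point it shows is the separation of work: spatial modes are evaluated once, temporal phase factors are evaluated once, and the two are then combined.

```python
import numpy as np

def synthesize_light_spring(l_values, omegas, phases, x, y, t, w0=1.0):
    """Coherently sum OAM modes; returns a complex field of shape (n_time, n_y, n_x).

    Hypothetical helper: each mode n has topological charge l_values[n],
    angular frequency omegas[n] and spectral phase phases[n].
    """
    X, Y = np.meshgrid(x, y)
    r = np.hypot(X, Y)
    theta = np.arctan2(Y, X)

    # Spatial modes, computed once: shape (n_modes, n_y, n_x).
    # Simplified ring profile, standing in for Bessel-Gauss modes.
    l = np.asarray(l_values)[:, None, None]
    spatial = (r / w0) ** np.abs(l) * np.exp(-(r / w0) ** 2) * np.exp(1j * l * theta)

    # Temporal factors exp(-i(omega_n t - phi_n)), computed once: shape (n_time, n_modes).
    temporal = np.exp(-1j * (np.outer(t, omegas) - np.asarray(phases)[None, :]))

    # Contract over the mode index to obtain the field at every (t, y, x).
    return np.tensordot(temporal, spatial, axes=(1, 0))
```

In this picture, a sectorized spectral phase amounts to choosing the entries of `phases` mode by mode, for example a constant step between neighbouring modes or a linear slope in frequency.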

-

29

Experimental Techniques for Measuring MTF in Large-Dimension Optics

In this project, techniques for the measurement of the Modulation Transfer Function (MTF) in large-dimension optical systems will be assessed to evaluate image-quality characteristics in telescopes and CCD/CMOS cameras, focusing especially on Earth-observation systems. The evaluated techniques may be used to perform acceptance tests, verifications and calibrations of telescopes intended as payloads in space missions. This work proposes a signal processing pipeline to evaluate the MTF from data acquired in the experimental phase, for which simulated test images are generated to support the calibration of the pipeline. Furthermore, this work supports the definition of an experimental setup to be implemented in subsequent phases and the development of software tools for estimating optical performance in captured images in accordance with ISO 12233. The proposed processing pipeline for MTF estimation is presented in detail, along with a validation strategy based on an additional contrast measurement technique using sinusoidal Siemens star targets.
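As a rough illustration of what an edge-based MTF estimate involves, the sketch below takes a one-dimensional edge profile, differentiates it to obtain the line spread function, and normalises the magnitude of its Fourier transform. This is a simplified stand-in and not the pipeline proposed in this work: it omits the slanted-edge oversampling and binning steps of the full ISO 12233 procedure, and the function name and `pixel_pitch` parameter are assumptions.

```python
import numpy as np

def mtf_from_edge(esf, pixel_pitch=1.0):
    """Estimate the MTF from a 1D edge spread function (ESF).

    Returns (spatial frequencies in cycles per unit of pixel_pitch, MTF values).
    """
    esf = np.asarray(esf, dtype=float)
    # The line spread function (LSF) is the derivative of the ESF.
    lsf = np.gradient(esf)
    # A Hann window tapers the tails to limit truncation and noise effects.
    lsf = lsf * np.hanning(lsf.size)
    # The MTF is the magnitude of the LSF spectrum, normalised to 1 at zero frequency.
    spectrum = np.abs(np.fft.rfft(lsf))
    mtf = spectrum / spectrum[0]
    freqs = np.fft.rfftfreq(lsf.size, d=pixel_pitch)
    return freqs, mtf
```

Simulated edges with a known blur, such as a Gaussian of chosen width, give a convenient sanity check before the routine is applied to measured data.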

-

30

Automated positioning and calibration procedures for improved imaging performance of a novel small animal in-beam PET scanner

Proton therapy offers superior dose conformity compared to conventional radiotherapy, but the full clinical exploitation of its potential healthy-tissue-sparing benefits remains limited by uncertainties in beam range within the patient. Positron Emission Tomography (PET)-based verification provides a promising in vivo method to monitor proton range by imaging irradiation-induced $\beta^+$ activity. This thesis aims to enhance the flexibility and autonomous operation of the SIRMIO in-beam small-animal PET scanner by equipping it with high-precision motorized stages and a TANGO-based control system, enabling its integration into diverse irradiation setups. In parallel, novel calibration and sensitivity characterization strategies will be developed to shorten the acquisition times of these procedures. Synthetic phantom-based denoising approaches will also be developed to improve quantitative accuracy under low-count conditions.

-

31

Quantum Simulation of Particles in External Fields

Quantum computing has grown rapidly in the last few years, making it one of the most active research areas today. This thesis investigates one of its applications: the simulation of the dynamics of charged particles in strong laser fields in the Strong Field Quantum Electrodynamics (SFQED) regime. Quantum algorithms are developed that decompose the Time Evolution Operator into discrete quantum gates using both the Lie-Trotter product formula and Quantum Fourier Transforms, enabling the simulation of particle interactions in diverse external fields. The use of Walsh Functions enables the implementation of time-dependent vector potentials, more specifically Sauter pulses and oscillatory plane waves, on a quantum circuit, overcoming the challenge of representing continuous functions like cosines and hyperbolic tangents on digital quantum computers. The proposed algorithms were validated through 2D simulations comparing Classical and Quantum Trotterization, demonstrating that the quantum approach accurately reproduces expected physical behaviors, such as wave packet spreading and oscillatory drift, matching theoretical calculations. Future work aims to extend these methods to full plane wave potentials without dipole approximations, refine error analysis, and implement the algorithms on actual quantum hardware.
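The idea of combining a Lie-Trotter product formula with Fourier transforms has a well-known classical counterpart, the split-step Fourier method, which the sketch below implements in one dimension. It is an illustrative classical analogue under stated assumptions (units with $\hbar = m = 1$, a static scalar potential, periodic boundaries, hypothetical function names) and not the quantum circuits developed in the thesis. Each step applies the potential factor in position space and the kinetic factor in momentum space.

```python
import numpy as np

def trotter_step(psi, potential, k, dt):
    """One first-order Lie-Trotter step: exp(-i T dt) exp(-i V dt) applied to psi."""
    psi = np.exp(-1j * potential * dt) * psi      # potential part, diagonal in x
    psi_k = np.fft.fft(psi)                       # switch to momentum space
    psi_k *= np.exp(-0.5j * k**2 * dt)            # kinetic part, diagonal in k
    return np.fft.ifft(psi_k)                     # back to position space

def evolve(psi0, potential, x, dt, n_steps):
    """Evolve psi0 on the uniform periodic grid x for n_steps Trotter steps."""
    dx = x[1] - x[0]
    k = 2.0 * np.pi * np.fft.fftfreq(x.size, d=dx)  # angular wavenumbers
    psi = np.asarray(psi0, dtype=complex)
    for _ in range(n_steps):
        psi = trotter_step(psi, potential, k, dt)
    return psi
```

With a zero potential, the routine reproduces the spreading of a free Gaussian wave packet, which is one of the behaviours the abstract uses for validation.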

-

-

-

08:30

Opening Session

Introduce the Workshop and share further information.

-

32

Probing Disorder Effects in Topological Materials via Non-Linear Hall Response

Topology and geometry have recently emerged as pillars for exotic phenomena in condensed matter physics. From topological insulators and superconductors to quantum Hall states and fractional statistics, these phenomena have the potential to revolutionize technology in the areas of spintronics, low-energy electronics and quantum computing. The non-linear Hall effect (NLHE) is one such exotic phenomenon: without a magnetic field, it is possible to generate a Hall response in samples with non-trivial quantum geometry. In this project, the influence of granular disorder on the NLHE in the three-dimensional topological insulator Bi₂Te₃ is studied through a combination of experimental measurement and theoretical analysis. More precisely, Bi₂Te₃ samples with varying grain size will be fabricated and their Hall responses measured and then analyzed through the Boltzmann transport framework. This analysis aims to understand the interplay between disorder strength and the quantum geometric properties of Bi₂Te₃, in particular its Berry curvature dipole, and to determine which contribution to the NLHE dominates: the disorder-induced contributions or the quantum geometric contribution. This project will contribute to the growing understanding of topology and quantum geometry in disordered quantum matter, which is indispensable for the development of realistic technologies.

-

33

Structural Characterization of Argon Ion Implantation Effects in 4H-SiC for Advanced Electronic Applications

This project aims to clarify the influence of polytype on irradiation-induced damage buildup and recovery mechanisms through a comparative study of 4H-SiC and 6H-SiC under identical implantation and annealing conditions. Samples will be irradiated at room temperature with 300 keV argon ions at fluences ranging from $1 \times 10^{13}$ to $1 \times 10^{15}$ ions/cm$^{2}$, with implantation conditions chosen based on SRIM simulations and a literature review. Post-implantation annealing will be performed at selected temperatures up to 1000 $^\circ$C. Both annealed and non-annealed samples will be characterized using X-ray diffraction (XRD) and Rutherford backscattering spectroscopy in channeling mode (RBS/C). Strain and disorder depth distributions will be extracted from MROX simulations, enabling a direct comparison between simulated and experimental results. Preliminary measurements on non-irradiated samples confirm the high crystalline quality and structural consistency of the initial 4H-SiC samples.

-

34

Magneto-ionic control of chiral magnetic textures for neuromorphic computing

Magnetic skyrmions, topologically protected, particle-like spin textures, are highly promising candidates for energy-efficient spintronic devices, particularly as artificial synapses in neuromorphic hardware [1-3]. Their key advantages include full electrical controllability, non-volatility and stability at room temperature, and the ability to represent synaptic weights through the countable number of skyrmions confined at a specific location in a device. Because skyrmions behave as discrete entities that can be nucleated, moved, summed, and electrically read, they offer a natural physical substrate for implementing the weighted-sum operations central to neural-network computation [3].

This project aims to demonstrate such synaptic behaviour in micrometer-scale devices patterned in a magnetic tunnel junction (MTJ) stack. The MTJ incorporates a free CoFeB layer magnetically coupled to a Co/Ru/Pt skyrmion-hosting multilayer (MML). By enabling electrically controlled skyrmion nucleation in the MML and exploiting the magnetic imprint transferred to the CoFeB free layer, the device can encode and read non-volatile synaptic weights through tunnel magnetoresistance.

Micromagnetic simulations are used to model the full stack (skyrmionic multilayer, spacer, and MTJ free layer) and to optimise material thicknesses and multilayer repetition to obtain stable skyrmions with desired diameters and strong imprint onto the free CoFeB layer for efficient readout. For experimental validation, we fabricate MTJs integrating the skyrmionic free layer and demonstrate electrical nucleation and synaptic-like weight updates. Building on this concept, we aim to design small arrays of MTJs capable of performing weighted-sum operations using multiple skyrmion-based synapses.

Together, the results establish a clear route toward electrically operated, skyrmion-based synaptic elements and scalable spintronic neuromorphic hardware.

[1] J. Grollier et al., Nature Electronics 3 (2020).

[2] K. M. Song et al., Nature Electronics 3 (2020).

[3] T. da Câmara Santa Clara Gomes et al., Nature Electronics 8 (2025).

-

35

Multi-Level Magnetic Tunnel Junctions

The continuous evolution of computing architectures and the rapid growth of data generation have intensified the demand for non-volatile memory technologies that combine high density, low power consumption, and fast operation. In this context, magnetoresistive random-access memory (MRAM), based on magnetic tunnel junctions (MTJs), has emerged as a promising candidate. However, conventional MTJs are inherently limited to two resistance states, restricting information storage to a single bit per cell.

This work focuses on the development and optimization of multi-level MTJs capable of exhibiting more than two stable resistance states, enabling multi-bit storage within a single memory cell. In particular, a shape-anisotropy-based approach is explored, employing a free layer composed of two crossing ellipses (TCE) that can stabilize four distinct remanent magnetic states, combined with a single-ellipse fixed layer. Writing is achieved through spin–orbit torque (SOT), which allows physical separation between write and read paths, reducing electrical stress on the tunnel barrier.

The theoretical foundations of MTJs and the main SOT mechanisms are reviewed, followed by the presentation of a fabrication process compatible with microfabrication at INESC-MN, structured into four lithography levels. Furthermore, micromagnetic simulations performed using MuMax3 demonstrate the stabilization of four remanent states in the TCE free layer and the corresponding normalized MTJ electrical readout.

The results confirm the potential of shape-anisotropy-based multi-level MTJs as key building blocks for high-density non-volatile memories and emerging neuromorphic computing architectures. This work establishes a solid foundation for future experimental fabrication, electrical characterization, and further device optimization.

-

36

Ga₂O₃ Thin Films for Integrated Photonics through Doping and Ion Implantation

Gallium oxide (Ga₂O₃) is a wide-bandgap semiconductor that has attracted significant attention for optoelectronic and photonic applications. Recent studies suggest that it is possible to tune both its bandgap and refractive index with aluminum incorporation, making it an even more versatile material platform for next-generation broadband integrated photonic devices. This thesis aims to make use of this tunability to enable local refractive index engineering for photonic applications, particularly optical waveguides, via irradiation and ion implantation. This involves the fabrication, modification and optical characterization of Ga₂O₃ thin films to achieve better control over their optical properties.

The thin films are fabricated using radio-frequency magnetron sputtering (RFMS) under varied deposition conditions with controlled aluminum incorporation. Post-deposition annealing is used to modify their optical properties, while ion implantation and irradiation will be investigated as techniques for local modification of the refractive index. Characterization methods, including ellipsometry, optical absorption spectroscopy, Rutherford backscattering spectrometry (RBS), X-ray diffraction (XRD) and scanning electron microscopy (SEM), are used to evaluate the films’ optical response, composition and structure. Preliminary experimental results confirm the modification of the films’ optical properties through doping and different deposition conditions, with changes observed in refractive index and bandgap energy.

-

37

Controlled Modification of Ga₂O₃ Interfaces for Photovoltaic and Photoconductive UV Detectors

Gallium Oxide (Ga₂O₃) is an ultra-wide bandgap semiconductor with strong potential for UV “solar-blind” photodetectors. The objective of this work is to investigate the controlled modification of Ga₂O₃/metal interfaces in a metal-semiconductor-metal (MSM) structure by ion implantation and thermal annealing. Control of the electrical contacts allows MSM photodetectors to operate in different modes, photovoltaic or photoconductive, depending on whether the contacts are Ohmic or Schottky.

Ga₂O₃ thin films will be deposited by RF magnetron sputtering and the effect of different values of power, pressure and deposition time will be studied. Doping of these films with Sn donors during growth or by ion implantation will also be investigated. Subsequently, the previously mentioned contacts will be made by using different metals (Ni, Ti and Pt) deposited on the semiconductor by magnetron sputtering. Then, post-deposition treatment processes, such as thermal annealing, will be applied in order to modify the electrical, optical and structural properties of the MS interfaces. These properties will be characterized by different experimental techniques, and the final device performance will be assessed by measuring photodetector figures of merit such as responsivity, detectivity, external quantum efficiency, photo-to-dark current ratio and response time.

-

38

TMR-Based Angular Magnetoresistive Sensors

This integrated project establishes a comprehensive methodology for developing high-accuracy angular position sensors based on Tunnel Magnetoresistance (TMR) technology. By employing a Stoner-Wohlfarth macrospin model, the magnetic behavior of multilayer stacks is simulated to account for anisotropies and interlayer couplings, enabling performance prediction and design optimization. A Wheatstone bridge configuration with orthogonal reference layers is implemented to suppress harmonic distortions and generate orthogonal sine-cosine signals, facilitating full 360° angle reconstruction. The sensors are fabricated using a dedicated microfabrication process, including magnetron sputtering, lithography, and thermal annealing. Experimental characterization with a six-probe measurement system validates the design, demonstrating sinusoidal bridge outputs and a reconstructed angular error consistent with simulation trends. The work confirms TMR sensors as a robust platform for precision angular sensing and provides a validated framework for future performance enhancement and application-specific development.
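The step from orthogonal sine-cosine bridge signals to a full 360° angle is typically done with a two-argument arctangent. The sketch below shows that reconstruction together with a wrapped angular-error measure. The function names and the offset and amplitude parameters are assumptions for illustration and are not taken from the project's own processing.

```python
import numpy as np

def reconstruct_angle(v_sin, v_cos, offset_sin=0.0, offset_cos=0.0,
                      amp_sin=1.0, amp_cos=1.0):
    """Return the angle in degrees, in [0, 360), from two bridge outputs."""
    # Remove offsets and equalise amplitudes before taking the arctangent.
    s = (np.asarray(v_sin, dtype=float) - offset_sin) / amp_sin
    c = (np.asarray(v_cos, dtype=float) - offset_cos) / amp_cos
    # arctan2 resolves the quadrant, which a plain arctan(s / c) cannot do.
    return np.degrees(np.arctan2(s, c)) % 360.0

def angular_error(measured_deg, true_deg):
    """Signed error in degrees, wrapped into [-180, 180)."""
    diff = np.asarray(measured_deg, dtype=float) - np.asarray(true_deg, dtype=float)
    return (diff + 180.0) % 360.0 - 180.0
```

Uncorrected offsets or amplitude mismatch between the two bridges show up as periodic components in the angular error, which is why they are removed before the arctangent.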

-

39

Multi-Higgs Doublet Models and Softly-Broken Symmetries

Despite the successes of the Standard Model (SM), several fundamental questions remain unanswered. Motivated by this, extensions such as Multi-Higgs Doublet Models (NHDMs) have been proposed. NHDMs enlarge the scalar sector of the SM, leading to a rapid growth of the parameter space, which makes the imposition of symmetries particularly important.

This work focuses on Two- and Three-Higgs Doublet Models (2HDMs and 3HDMs, respectively), constrained by discrete and continuous symmetries, either exact or softly broken, and on the stability of these constraints under renormalisation group (RG) evolution. The analysis resulted in the derivation of the one-loop β functions for the most general 3HDM and in the identification of a novel 3HDM constrained by a GOOFy symmetry.

-

40

Decoding Neutrino Interactions: A Model-Independent Approach with DUNE-PRISM

Precision measurements of neutrino oscillations at DUNE are limited by systematic uncertainties associated with neutrino--nucleus interaction modelling. The DUNE-PRISM programme addresses this challenge by exploiting off-axis measurements at the Near Detector to reconstruct neutrino fluxes in a largely model-independent way. In this work, we implement and validate a DUNE-PRISM flux-matching framework in which linear combinations of off-axis $\nu_\mu$ fluxes are optimised to reproduce target $\nu_e$ spectra,

\begin{equation}
\Phi_{\nu_e}(E) \simeq \sum_j c_j \, \Phi_{\nu_\mu,j}(E).
\end{equation}

The coefficients $c_j$ are determined through a combined optimisation of interpolation quality and total uncertainty, with Tikhonov regularisation applied to stabilise the solutions. The resulting coefficients are propagated to Monte Carlo event rates to extract estimators of the cross-section ratio $\sigma_{\nu_\mu}/\sigma_{\nu_e}$ and the corresponding neutrino--antineutrino double ratio. Detector effects are included via forward folding to reconstructed energy. The results demonstrate that DUNE-PRISM provides a robust and quantitatively controlled method for reducing flux and cross-section systematics, enhancing DUNE's sensitivity to CP violation.
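A minimal sketch of how coefficients $c_j$ of this kind can be obtained is a Tikhonov-regularised (ridge) least-squares fit, shown below. It assumes the simplest penalty, $\lambda \lVert c \rVert^2$ with an identity regularisation matrix, and ignores the uncertainty term that the actual analysis includes in its optimisation. The function and parameter names are hypothetical.

```python
import numpy as np

def fit_flux_coefficients(off_axis_fluxes, target_flux, reg_strength):
    """Solve min_c ||A c - b||^2 + reg_strength * ||c||^2.

    off_axis_fluxes: array of shape (n_positions, n_energy_bins), one flux per row.
    target_flux:     array of shape (n_energy_bins,).
    """
    A = np.asarray(off_axis_fluxes, dtype=float).T   # columns are off-axis fluxes
    b = np.asarray(target_flux, dtype=float)
    n = A.shape[1]
    # Regularised normal equations: (A^T A + lambda I) c = A^T b.
    return np.linalg.solve(A.T @ A + reg_strength * np.eye(n), A.T @ b)
```

Increasing `reg_strength` suppresses large, rapidly alternating coefficients at the cost of a less exact match to the target spectrum, so in practice it is scanned to balance the two.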

41

Electroweak Phase Transitions Impact on Dark Matter

This work explores how the timing and structure of electroweak symmetry breaking (EWSB) affect the freeze-out process of dark matter (DM) in the early Universe. While standard relic density calculations assume that EWSB has already occurred, recent studies show that for sufficiently heavy DM candidates, freeze-out may take place in the symmetric phase, before the Higgs field acquires a vacuum expectation value. In this regime, particle masses and interactions differ significantly, leading to large deviations in the predicted DM relic abundance.

After reviewing the mechanism of EWSB within the Standard Model and the astrophysical evidence for dark matter, this project presents recent work introducing a phase-aware approach to relic density calculations, in which the Boltzmann equation is solved with temperature-dependent inputs corresponding to the correct electroweak phase. This refined treatment highlights the limitations of the standard approach, especially for heavy DM candidates.

Looking ahead, this project aims to implement at least one model with a two-step EWSB including three distinct stages in the early Universe, where multiple scalar fields acquire vacuum expectation values at different times. The goal is to implement multi-phase freeze-out in micrOMEGAs, enabling more accurate predictions for dark matter abundance in extended models.
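For orientation, the relic-density calculation referred to above is usually formulated as a Boltzmann equation for the comoving yield. The expression below is the textbook form and not necessarily the one implemented in this project. It assumes a constant DM mass $m_\chi$ and neglects the temperature variation of the effective number of degrees of freedom. Here $s$ is the entropy density, $H$ the Hubble rate, $n_\chi$ the DM number density and $\langle\sigma v\rangle$ the thermally averaged annihilation cross-section.

\begin{equation}
\frac{dY}{dx} = -\frac{s\,\langle\sigma v\rangle}{H\,x}\left(Y^2 - Y_{\rm eq}^2\right), \qquad x = \frac{m_\chi}{T}, \quad Y = \frac{n_\chi}{s}.
\end{equation}

In a phase-aware treatment, $\langle\sigma v\rangle$ and $Y_{\rm eq}$ would be evaluated with the masses and couplings of whichever electroweak phase holds at the temperature in question.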

-

42

Machine Learning techniques to explore scalar-fermion couplings

The couplings of the $125\,\textrm{GeV}$ Higgs are being measured with higher precision as the Run 3 stage of the LHC continues. Models with multiple Higgs doublets allow potential deviations from the SM predictions. For more than two doublets, there are five possible types of models that avoid flavor-changing neutral couplings at tree level by the addition of a symmetry. We consider a softly broken $\mathbb{Z}_2\times\mathbb{Z}_2$ three-Higgs doublet model with explicit CP violation in the scalar sector, exploring all five possible types of coupling choices and all five mass orderings of the neutral scalar bosons. The phenomenological study is performed using a Machine Learning black box optimization algorithm that efficiently searches for the possibility of large pseudoscalar Yukawa couplings. We identify the model choices that allow a purely pseudoscalar coupling in light of all recent experimental limits, including direct searches for CP violation, thus motivating increased effort into improving the experimental precision. An article with these results has been submitted for publication.

-

43

Yukawa Couplings in 3HDM

We study a three-Higgs-doublet extension of the Standard Model invariant under a generalized CP (GCP) transformation and focus on the specific GCP realization labelled by $(\theta,\alpha,\beta,\gamma)=(\pi/3,\pi/3,\pi/3,\pi/3)$. This model, a natural 3HDM continuation of a previously identified non-trivial GCP-invariant 2HDM, belongs to the CPc class of scalar potentials and yields a total of 22 free parameters (four from the scalar-field parametrization and eighteen from the Yukawa matrices). We classify the allowed Yukawa textures for this choice of GCP, construct the full Lagrangian, and perform a global numerical fit to the quark-sector data (quark masses and CKM matrix elements, including CP violation as measured by the Jarlskog invariant). We find that the $\pi/3$ 3HDM provides an excellent fit to the measured quark masses and CKM observables while maintaining non-degenerate, non-zero masses and a non-vanishing Jarlskog invariant. The fit reduces the effective parameter freedom and leads to characteristic correlations among Yukawa entries and between fermion and scalar sector observables; these give rise to concrete, testable predictions for the scalar spectrum and for flavor-changing observables. We discuss the phenomenological implications and avenues for experimental discrimination in future flavor and collider studies.

-

44

Light scalars in the triplet seesaw model

The Standard Model (SM) is the most successful theory in physics, accurately describing the electromagnetic, weak, and strong interactions of fundamental particles. However, it fails to explain some observed phenomena, such as neutrino oscillations/masses, leptonic charge-parity (CP) violation and mixing patterns, i.e., the neutrino flavor puzzle.

In this work, we explore an extension of the SM via the two-scalar-triplet model. This framework naturally generates small neutrino masses through the Type-II seesaw mechanism and gives rise to leptonic CP violation through spontaneous CP violation. Furthermore, it addresses the neutrino flavor puzzle by accommodating two texture zeros in the neutrino mass matrix, which are enforced by softly broken Abelian symmetries. Finally, we present a numerical analysis of the model’s predictions for the non-decoupled scalars and evaluate the compatibility of the texture zeros with experimental data.

-

12:15

Lunch Break

-

45

Generation of Longitudinal MRI and PET Data in Patients with Alzheimer’s Disease

Alzheimer’s disease (AD) is an irreversible neurodegenerative disease whose clinical management and research heavily rely on longitudinal neuroimaging, particularly magnetic resonance imaging (MRI) and positron emission tomography (PET). However, real-world clinical datasets are frequently affected by missing imaging time points due to patient dropout, irregular follow-ups, or logistical constraints, limiting the ability of predictive models to accurately capture disease trajectories. This work addresses the problem of missing longitudinal neuroimaging data by developing a generative model capable of synthesizing realistic and biologically plausible MRI and PET images conditioned on available patient information.

The proposed approach explores state-of-the-art generative techniques, with a focus on diffusion-based models. A baseline Denoising Diffusion Probabilistic Model (DDPM) will first be implemented to generate unconditional brain images. This will then be extended to a conditional Stable Diffusion framework that enables the generation of missing MRI or PET scans by conditioning on multimodal clinical information, including cognitive test scores, biomarkers, and prior imaging data. To further enforce anatomical consistency and preserve patient-specific structural characteristics, segmentation-guided diffusion will be incorporated, conditioning the generation process on brain masks.

The models are trained and evaluated using large-scale longitudinal datasets from the Alzheimer’s Disease Neuroimaging Initiative (ADNI) and OASIS-3. Performance is assessed through a comprehensive evaluation framework combining image quality metrics, such as MSE, SSIM, and Fréchet Inception Distance, with biologically informed criteria that examine anatomical fidelity and consistency with known patterns of Alzheimer’s disease progression.

-

46

Correlated Imaging and Analysis of Single Cells: A Biophysical Perspective on Advanced Fluorescence Microscopy

Fluorescence microscopy enables molecularly specific imaging in living cells using fluorescent labels that report the location and behaviour of selected targets. Widefield imaging is fast and simple, but it suffers from out-of-focus blur and background, especially in thicker samples.

In this report, these limitations are addressed by advanced fluorescence imaging techniques, such as confocal microscopy, which improves contrast through optical sectioning, and two-photon excitation, which confines excitation of fluorophores to the focal volume and supports deeper imaging in scattering tissue; both approaches are discussed in terms of their trade-offs in speed, signal, and phototoxicity. Super-resolution is discussed with a focus on stimulated emission depletion (STED), where a red-shifted, donut-shaped depletion beam suppresses fluorescence around the focal centre to achieve sub-diffraction resolution. Key practical limits in live cells include light dose, fluorophore photostability, labelling density and calibration. Multicolour fluorescence imaging is used to compare multiple targets within the same cell and to assess spatial overlap (co-localisation) between channels.

Dynamic imaging adds time-resolved information. FRAP and FLIP quantify mobility and exchange via fluorescence recovery after photobleaching and fluorescence loss in photobleaching, respectively, while FRET and FLIM (including FLIM-FRET) probe nanometre-scale proximity and interaction dynamics. Overall, methods should be chosen according to the biological question and the required spatial–temporal scale, aiming to maximize information while minimizing perturbation of living cells.

-

47

Detection of Biosignatures and Organic Compounds in Icy Moons using spectral features

Icy moons such as Enceladus represent prime targets for detecting life beyond Earth due to their subsurface oceans and active plume emissions, which enable direct sampling. This work develops a multi-instrument methodology to analyse Cassini VIMS and INMS data, characterising organic compounds and assessing biosignature potential. VIMS analysis reveals systematic differences between tiger stripe fractures and nearby terrain, with organic C-H features and CO$_2$ ice indicating subsurface volatile delivery. The detection of crystalline ice implies formation temperatures exceeding 130 K. INMS reveals water dominance (79.30%) with high molecular diversity, including ammonia, radiogenic $^{40}$Ar, and benzene, supporting an active ocean with water-rock interactions. The methodologies align well with established approaches, validating the analysis framework. However, Cassini's instrumental limitations prevent definitive biosignature detection. The combined evidence establishes Enceladus as a prime astrobiology target with conditions analogous to Earth's hydrothermal vents, providing a validated framework for future ocean world exploration.

-

48

Predicting Commodity Turning Points with Mixed Causal–Noncausal Models and Exogenous News

Commodities play a pivotal role in the global economy and, like other financial assets, are susceptible to speculative bubbles. These speculative episodes, marked by sharp price rises followed by abrupt corrections, can generate severe financial instability. Standard causal time-series models are ill-suited for capturing these speculative dynamics. However, mixed causal–noncausal autoregressive (MAR) models have recently emerged as a promising alternative, combining backward-looking (causal) dynamics with forward-looking (noncausal) components, and thereby enabling the modeling of anticipatory behavior and bubble-like episodes. This thesis further develops and empirically tests MAR models for forecasting turning points and crash risk in commodity prices.

The core contribution is the introduction of a MAR framework augmented with exogenous news variables, constructed from macroeconomic and sector-specific news indices as well as text-based signals. This extended MAR model aims to improve early warning signals for price reversals, disentangle surprise-driven from anticipatory effects, and assess how news propagates through causal and noncausal channels. Methodologically, the project estimates MAR benchmarks under non-Gaussian errors, employs likelihood-based identification, and evaluates predictive gains in crash and regime-switch forecasting across major commodity markets (oil, metals, and agricultural products). Overall, this thesis aims to deliver practical insights for policy analysts on integrating news into forward-looking time-series models.

-

49

Evaluating the Potential of Radiosensitizers to Enhance the Effectiveness of Radiation Therapy

Radiation therapy is a central modality in cancer treatment, but its lack of cellular selectivity often results in damage to surrounding healthy tissues. Radiosensitization has emerged as a promising strategy to enhance tumour response while minimizing toxicity.

In this context, several studies explore gold-based radiosensitizers, such as gold nanoparticles (AuNPs) in MCF-7 cells and gold-coated nanodiamonds (NDAus) in A549 cells, showing increased local dose deposition and DNA damage in tumour cells.

This work aims to evaluate the potential of confocal microscopy for three-dimensional quantification of DNA double-strand breaks and their repair, using Fiji software for automated image analysis, in order to compare this 3D approach with conventional 2D methods. Three-dimensional analysis will support the discussion of confocal microscopy, together with Fiji, as a robust tool to study radiosensitization effects. In addition, the use of NDAus in other tumour cell lines will be investigated, broadening the assessment of radiosensitizers.

-

50

Data-driven Fault Detection, Isolation, and Recovery (FDIR) for spacecraft

Modern spacecraft operate in harsh environments, where on-board intervention by engineers is not possible, making autonomy a critical requirement for mission success. Fault Detection, Isolation and Recovery (FDIR) plays a critical role in ensuring spacecraft safety and operational continuity, yet traditional FDIR approaches struggle to scale with the increasing complexity and interconnectivity of modern space systems. Machine learning techniques have shown strong performance in anomaly detection, fault isolation and recovery, but due to fault propagation these tasks remain challenging.

This work proposes a data-driven FDIR framework that uses machine learning models to detect anomalies in spacecraft data and isolates failures with the help of directed dependency graphs that describe dependency relationships between system components. Through these graphs, we aim to explicitly model how faults propagate through a system, creating a framework that can autonomously tell where each fault originated. Building on this framework, fault recovery decisions based on machine learning strategies, such as reinforcement learning, should aim to mitigate faults with minimal impact on mission objectives.

By combining machine learning with structured system knowledge, this work aims to improve fault isolation accuracy and recovery efficiency, contributing toward more robust, autonomous, and resilient spacecraft fault management operations.

-

51

Glint Model for New Diagnostic for the National Ignition Facility

Understanding the X-ray drive deficit in inertial confinement fusion is crucial to realizing fusion as a viable energy source. To this end, the National Ignition Facility (NIF) has performed proof-of-concept experiments for a new post-shot transmitted-beam diagnostic, which requires improved understanding of laser glint and thermal filamentation. In this work, we develop a theoretical model of laser glint from oblique reflection off an expanding plasma and compare it to two NIF proof-of-concept experiments. We describe laser propagation and reflection in a collisional plasma, as well as the inverse Bremsstrahlung absorption mechanism. We model the plasma using a self-similar isothermal expansion. Ionization scaling laws are derived from simulations performed at Lawrence Livermore National Laboratory, and corrections to the electron-ion collision rate due to the Langdon effect are included. The Coulomb logarithm is adapted to accurately describe inverse Bremsstrahlung absorption in the relevant regime. The model reproduces the observed qualitative behavior of glint decay while highlighting discrepancies that point to missing physics, such as radiative losses and thermal filamentation. Finally, we outline a numerical pathway for studying glint and thermal filamentation from first principles using particle-in-cell simulations, and present preliminary results on inverse Bremsstrahlung absorption.

-

52

Simulation Tool Developments for Extreme Laser-Matter Interactions

As laser intensities exceed $10^{21}\,\mathrm{W\,cm^{-2}}$, plasma dynamics become dominated by Strong-Field Quantum Electrodynamics (SFQED), which requires sophisticated simulation tools to model phenomena like gamma-ray emission and quantum radiation reaction. This work presents a C++ benchmark code developed to implement the Locally Monochromatic Approximation (LMA) within the particle-in-cell framework OSIRIS. Unlike the standard Local Constant Field Approximation (LCFA), already implemented in OSIRIS, LMA accounts for the harmonic structure of the fields at lower intensities while remaining valid in the ultrarelativistic regime.

The framework supports flexible particle and field initialization and is designed with parallelisation in mind for high-performance computing environments. Validation tests against analytical solutions for cyclotron motion and laser-pulse interactions have been successfully performed up to the implementation of LCFA, and are in perfect agreement with the theoretical predictions. This code provides a foundation for future LMA-based SFQED studies and contributes toward realistic modeling of upcoming high-intensity laser–plasma experiments, such as those at SLAC.

-

53

Modelling homogeneous DBD for CO2 conversion

A sustainable society requires the recycling of greenhouse gases into useful products (e.g. CO$_2$ into CO and O$_2$). For this conversion process, low-temperature plasmas are essential. These are electrically powered reactive gases with ideal conditions for gaseous conversion. Atmospheric pressure plasmas in particular have great potential for conversion of CO$_2$, due to the higher throughput at higher pressures. Nevertheless, atmospheric pressure plasmas are usually filamentary, transient and irreproducible, which renders them difficult to study and optimize. The recent discovery of CO$_2$ homogeneous Dielectric Barrier Discharges (DBDs) provides an opportunity to study fundamental processes for CO$_2$ plasma conversion at atmospheric pressure.

The physics of low-temperature plasmas in homogeneous DBDs spans different temporal and spatial scales and involves the interplay between different branches of physics: electromagnetism, fluid mechanics, statistical physics, and gaseous and surface reactivities. In particular, homogeneous DBDs include a time-dependent applied voltage, space-charge sheaths and the charging of dielectric surfaces. An accurate description of these media that allows predictive modelling and reactor optimization requires the development of multidimensional time-dependent numerical models. The goal of this work is to engage in multidimensional continuum simulations of low-temperature plasmas and their interaction with dielectric surfaces.

The project will be supervised by plasma modelers, within the framework of wider projects developing technologies for sustainable gaseous conversion, such as Project SYAMESE - Synergy between plasmas and separation membranes for sustainable CO2 conversion, involving plasma reactor experiments.

-

54

Modelling of Corona Discharges in Humid Air

Corona discharges are a fundamental plasma phenomenon that plays a significant role in high-voltage transmission and can appear naturally in atmospheric electricity. The associated range of applications includes environmental and industrial plasma systems, especially under atmospheric conditions.

Humidity strongly influences the discharge behavior, affecting its formation, evolution and stability, as well as the performance of devices that rely on it. Many of the mechanisms and events involved remain poorly understood, especially in complex environments. Therefore, the accurate modelling of corona discharges in humid air is the focus of this work.

The addition of water vapor molecules to the already complex kinetic processes of corona discharges increases the deviation between the predicted and observed behavior. Besides increasing the attachment coefficient of the gas mixture and altering the inception voltage, field and other parameters, it creates small droplets with low ionization potential. The formation of ion hydrates produces clusters which modify the charge distribution in space and may alter the local electric field.

The research begins with a dry-air model as a baseline, to which all the necessary continuity and transport equations for charged species, coupled with Poisson’s equation for the electric potential, will be added under varying humidity levels. The expected findings are both scientifically significant and technologically essential. Understanding the underlying physics is critical to the design, usage and application of coronas in order to achieve optimized plasma-based technologies that can be safely operated.

-

15:45